|

| Generated using Microsoft Designer (first attempt only) |

Most Business Intelligence platforms can solve similar problems, but how they do it is different. It's usually a mistake to try to implement a solution the exact same way as you would in another platform, just because that is what is familiar to you.

I explained the premise of this series of posts, starting here, with Power Query: Qlik developer primer for what's different in Power BI: Power Query

This is the first of two posts seeking to orient experienced Qlik developers with Power BI data modeling. I'll compare and contrast to try to get you started quickly with Power BI.

(Here is part 2.)

Designing the Data Model

Star Schemas

A star schema is a common data modeling technique that consists of a fact table surrounded by dimension tables, each with a one-to-many (1:m) relationship to the fact table. This structure allows for fast and efficient querying and analysis of the data and a small data footprint.

In general, star schemas are considered the best practice for both Power BI and Qlik, as they provide optimal performance and simplicity. Here's a good reference for Power BI data modeling, which is also a decent primer for dimensional modeling, in general: Understand star schema and the importance for Power BI

Multiple Fact Tables with Shared Dimensions

Many business use cases involve using data from multiple fact tables, often with some dimensions shared and others not. A common example is detailed actual fact data and more aggregated quota or forecast data, ex., available only at the month and salesperson granularity. Then what?

In Qlik, modeling this data requires additional steps: creating a link table (contains the keys of the shared dimensions) or concatenating (unioning) the fact tables. Qlik can't handle multiple shared dimensions between two fact tables, so you must avoid the constraint by either combining the shared dimensions -- a link table -- or combining the fact tables -- a concatenated fact table.

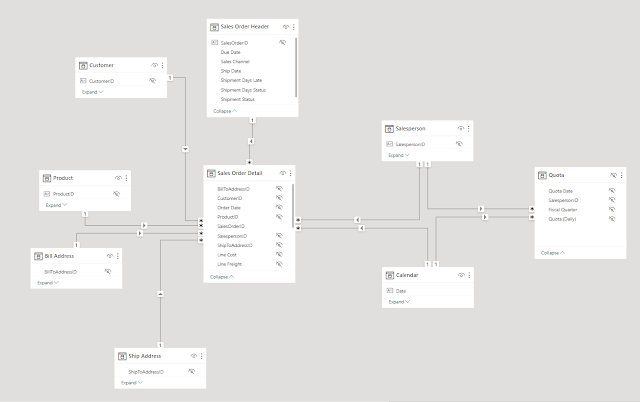

In Power BI, however, these tables can be used without any special consideration and achieve good performance. Power BI can handle multiple stars in a single Dataset and automatically generate the appropriate queries. As a result, there is less platform-specific data modeling -- what is good in Power BI is good for general dimensional modeling or querying with SQL. If you're curious, this is demonstrated here: Handling MULTIPLE fact tables in Power BI

|

| Two fact tables with two shared dimensions in Power BI, no problem |

Link tables and concatenated facts do work in Power BI, but aren't necessary and may have worse performance than multiple stars. Using multiple stars can also result in a smaller footprint (compared to concatenated fact) or less snowflaking (compared to a link table).

Anecdotally, Qlik is more forgiving with performance of the unconventional data models it requires. m:1 relationships perform better than m:m in Power BI, whereas Qlik does not seem to care (or notice, which isn't always desired). Where this frequently rears its head is when converting Qlik solutions with link tables to Power BI. The m:m relationship between the fact tables and link table spawns two Power BI queries whose results must be merged, which can be slow. Counterintuitively (because it has so many more rows and higher cardinality keys than the typical Qlik link table), we have actually seen the Puppini bridge perform better in Power BI, likely because the relationship cardinalities avoid the m:m problem.

Splitting the Data Model (Dataset) from Reports

In Qlik, the back end (data model) and front end (sheets and visualizations) are one and the same, as parts of the same app binary file. This means that each Qlik app has its own data model and cannot be shared or reused by other apps. Binary loading a data model to reuse it doubles the footprint of the data model on the server and does not include metadata like calculations.

Power BI enables a developer to manage Datasets (tables, relationships, calculations) independently from Reports (pages, visualizations, report-specific calculations). This separation has several advantages, such as:

- Multiple people working on the same solution at the same time, because the back end and front end are separate files

- Reusing a single Dataset for multiple Reports or scenarios

- Developing new Reports quickly and consistently using existing Datasets

- "Thin" reports (using remote data) can be developed and saved quickly because they are so small

- Centralizing Row-Level Security (RLS) rules across many use cases

- When Direct Query is used (live SQL option that is nascent in Qlik), there is no penalty for modeling additional data in terms of storage or refresh time, because queries only run on demand and return chart-specific results

A Power BI Dataset can also be used to Analyze in Excel, enabling people to self-serve through a pivot table-like interface, if that is their comfort zone.

Performance Tuning Tips

Regardless of the platform or data modeling technique used, the best practices that can help improve the performance of in-memory Datasets in Power BI are basically the same as in Qlik. These include:

- Using tall and narrow tables, which means having more rows and fewer columns

- Removing unused fields, unneeded rows, or unnecessary granularity of field values

- Aggregating the data when possible or filtering out unneeded data to reduce the number of rows

- Using low cardinality joins, which means having fewer distinct values in the join keys

Creating the Data Model

Data Model Relationships

Creating Relationships

In Qlik, the data model structure is inferred based on field names: if two tables have fields with the same name, they are assumed to be related. If you want to change the data model structure, you must change the field names. And once you are in the user interface, there is no differentiation between a field in one table and a field with the same name in another table.

In Power BI, there is an extra step to define relationships and join directions. The field names do not have to match across tables, and you always refer to fields using table qualifiers (e.g., Table[Field]). This gives you more control over the data model structure, can make it easier to debug, and can make some logic more explicit and easier to understand, ex., count distinct of customer key in the fact table is a count of active customers, and the query does not need to consider the customer table or join at all.

You also need to specify the direction of the relationships (single or bidirectional), which affects how filters and calculations propagate across tables. (We will talk more about the behavior of join directions in the next post.)

Relationships supported

In both platforms, you can only create relationships based on equality conditions (e.g., TableA[FieldA] = TableB[FieldA]) on just one field. If you need to create relationships based on other conditions (e.g., composite keys, ranges, inequalities), you need to resolve them in Qlik Load Script/Power Query or upstream of that. The one exception to this is that Qlik can resolve its own composite keys by automatically generating a synthetic key and table, which as a practice I never do (controversial, I know).

In Qlik, you can only create one relationship between two tables. If you need to use different relationships for different scenarios, you may need to use set analysis, alternate states, and/or island tables to modify or isolate your selections.

In Power BI, you can create multiple relationships between two tables, but only one can be active at a time. The active relationship is used by default for filtering and calculations. You can use DAX to override or create relationships dynamically, but this may add complexity or overhead to your data model. In my experience, this is not commonly used.

Relationship cardinality

In Qlik, the metadata to understand join cardinality is available (ex., "Has Duplicates"), but requires work on your part to find potential issues like unintended many-to-many relationships.

In Power BI, when you create a relationship between two tables, the cardinality is detected automatically and displayed as part of the relationship properties. This can help you verify that the relationship is correct and consistent with your source data and business logic. Sometimes, you may find that the cardinality is different from what you expected, which can alert you to potential issues. This has happened to me before because an assumption I made about a source table turned out to be wrong.

|

| Dang. |

Data Model Metadata

In Qlik, data types are detected automatically based on what has been loaded into a field. Qlik generally doesn't notice or care what data types exist. For example, Qlik wouldn't alert you to the fact that you associated a text field with a numeric field.

In Power BI, data types can be set (although they are usually inferred from sources), to optimize storage and performance. Unexpected values may not be loaded or generate error values. You could see this as a good thing or a bad thing: it might be more effort but also may alert you to data quality issues.

In Power BI, you can designate which tables are date tables, which enables time intelligence functions to work. These functions allow you to perform calculations based on time periods (e.g., year-to-date, month-over-month) or comparisons (e.g., same period last year).

In the next post, I will cover the behavior differences of the data models, plus some other features unique to one platform or the other.